Easily Get a List of Working Proxies with Forum Proxy Leecher

Proxies have been around for many years and are still widely used today. The main reason to use a proxy is to mask your IP address, at the cost of some speed and stability in your Internet connection. Another problem with proxies is that they usually don’t last long, and they are not necessarily highly anonymous, so they may leak some of your information. Proxy lists are also often used in scraping software. For example, if you try to scrape Google from a single IP address, Google will eventually detect it and block your requests by asking you to solve a CAPTCHA. This restriction can usually be bypassed by using a list of proxies and setting a timeout value.
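To make the idea of a proxy list combined with a timeout more concrete, here is a minimal Python sketch. It is only an illustration and not how any particular scraping tool works: the function names `fetch_with_proxy` and `fetch_rotating` and the 5 second default timeout are our own assumptions. Each proxy is tried in turn, and a proxy that is dead or too slow is simply skipped.

```python
import http.client
import urllib.request


def fetch_with_proxy(url, proxy, timeout):
    """Fetch url through a single HTTP proxy given as 'ip:port'."""
    handler = urllib.request.ProxyHandler(
        {"http": f"http://{proxy}", "https": f"http://{proxy}"}
    )
    opener = urllib.request.build_opener(handler)
    with opener.open(url, timeout=timeout) as response:
        return response.read()


def fetch_rotating(url, proxies, timeout=5.0, fetch=fetch_with_proxy):
    """Try each proxy in order and return the first successful response body.

    Returns None when every proxy fails or times out. The fetch argument
    is a hypothetical hook so the network call can be swapped out.
    """
    for proxy in proxies:
        try:
            return fetch(url, proxy, timeout)
        except (OSError, http.client.HTTPException):
            # Dead, refused or timed-out proxy: move on to the next one.
            continue
    return None
```

Calling `fetch_rotating("http://example.com", ["10.0.0.1:8080", "10.0.0.2:3128"])` would try the first address and fall back to the second if the first one fails. The addresses here are placeholders, not real proxies.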

Compiling a list of proxy addresses is a very tedious job if you do it manually. You have to look up proxy websites and forums, compile the proxies into a list, remove the duplicates and test them for speed, reliability, anonymity and status. Imagine doing that daily if you need a list of fresh proxies every day. Fortunately, there is software such as Forum Proxy Leecher that can automate the whole process and easily get you thousands of working proxies whenever you want.
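To give a rough idea of what the compiling and de-duplicating steps involve, here is a small Python sketch. It is our own illustration and not the code used by Forum Proxy Leecher: the `extract_proxies` function and the regular expression are assumptions. It pulls every `ip:port` pair out of a block of text, drops invalid addresses and duplicates, and returns the result in the usual one-proxy-per-entry format.

```python
import re

# Matches things like "10.0.0.1:8080", allowing spaces around the colon.
PROXY_RE = re.compile(r"\b(\d{1,3}(?:\.\d{1,3}){3})\s*:\s*(\d{1,5})\b")


def extract_proxies(text):
    """Return unique, valid 'ip:port' strings in the order they first appear."""
    seen = set()
    proxies = []
    for ip, port in PROXY_RE.findall(text):
        octets_ok = all(0 <= int(octet) <= 255 for octet in ip.split("."))
        port_ok = 1 <= int(port) <= 65535
        if not (octets_ok and port_ok):
            continue  # Looks like an address but is out of range.
        entry = f"{ip}:{port}"
        if entry not in seen:
            seen.add(entry)
            proxies.append(entry)
    return proxies
```

You could paste the text of a forum post into `extract_proxies()` and write the returned list to a text file, one proxy per line.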

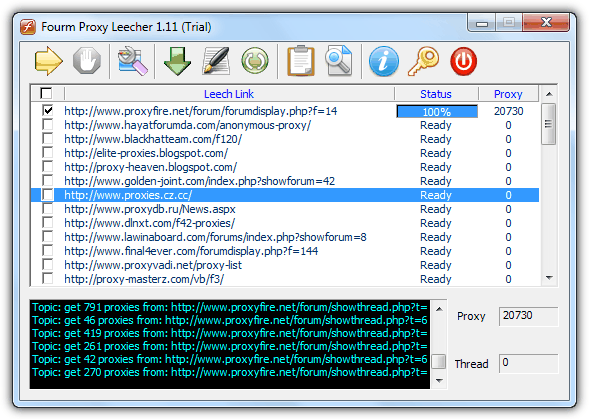

Forum Proxy Leecher has been around for many years and its development seems to have come to a halt. We tested it on Windows 7 and, amazingly, it still works! Forum Proxy Leecher is a very powerful tool that can crawl forum posts, which are a goldmine for proxies, or even normal websites, scrape the IP addresses and ports of the proxies, format them into a standard proxy list and automatically test them. Forum Proxy Leecher is actually shareware that costs $99.95, but the free trial version doesn’t have a time limit. The only restrictions are that the leech list cannot be updated automatically, only 1 forum can be scanned at a time, and the program may secretly send the results to the developer.

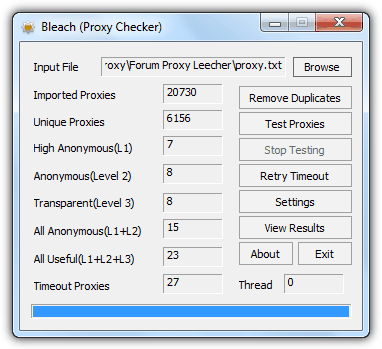

Forum Proxy Leecher is very easy to use. Just tick the checkboxes on the leech links that you want to crawl and click the Start leeching button. Once the link has been crawled, the built-in proxy testing tool called Bleach will automatically launch and start checking.
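We don’t know exactly how Bleach tests each proxy, so the Python sketch below is only a simplified stand-in for the checking step. It assumes the most basic possible test, which is whether the proxy’s port accepts a TCP connection within a timeout, and it does not measure speed or anonymity. The names `is_reachable` and `filter_reachable` and the default values are our own.

```python
import socket
from concurrent.futures import ThreadPoolExecutor


def is_reachable(proxy, timeout=3.0):
    """Return True if the 'ip:port' proxy accepts a TCP connection in time."""
    host, _, port = proxy.rpartition(":")
    try:
        with socket.create_connection((host, int(port)), timeout=timeout):
            return True
    except (OSError, ValueError):
        # Refused, timed out, unresolvable, or not a valid 'ip:port' entry.
        return False


def filter_reachable(proxies, timeout=3.0, workers=20):
    """Check many proxies in parallel and keep the reachable ones in order."""
    proxies = list(proxies)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = list(pool.map(lambda p: is_reachable(p, timeout), proxies))
    return [proxy for proxy, ok in zip(proxies, results) if ok]
```

A proxy that passes this check is only known to be listening. A real checker would also send a request through it to confirm that it actually relays traffic and to see how much of your information it exposes.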

Although the leech list cannot be updated automatically, we’ll show you how to update it manually by following the few simple steps below:

1. Visit http://fpl.my-proxy.com/forumlist.txt and copy everything from that page by pressing Ctrl+A to select all and Ctrl+C to copy.

2. In Forum Proxy Leecher, click the fifth button, “Edit the leech lists”. A forumlist.txt file will automatically open in Notepad. Press Ctrl+A to select all and then Ctrl+V to paste. Save the text file.

3. In Forum Proxy Leecher, click the sixth button, “Reload the leech lists”, to refresh the leech links.

Now you have the latest URLs to crawl and scrape for proxies. The only inconvenience now is being able to crawl only one URL at a time, but that will still save you a lot of time and effort. The 1st leech link alone can generate more than 20,000 proxies!

That kind of getting proxies never really works!!!